Enabling the “Data-Driven Organization” - The Vital Importance of Governance and Master Data Management

In my previous blog post, titled “Enabling the Data-Driven Organization: An Introduction to Enterprise Information Management,” I discussed the eight people, process, and technology components that must be established for organizations to become truly data-driven. The overarching goal of the data-driven organization is to manage information as a strategic corporate asset. The fundamental prerequisites for attaining this goal are the appropriate organizational structures, business processes, and tools for Governance, Data Governance, and Master Data Management (MDM). This edition covers these three vitally important Enterprise Information Management (EIM) disciplines, as well as best practices and lessons learned.

Governance

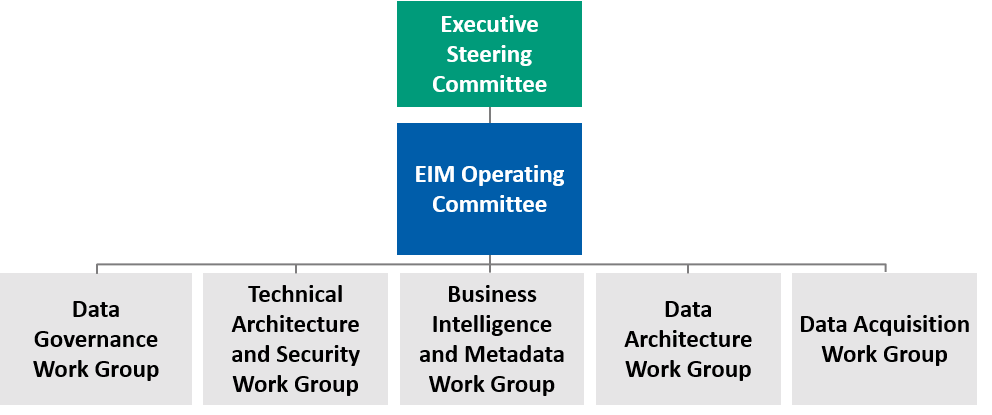

Governance includes C-suite sponsorship of the organization’s EIM initiative with support from a blended team of business and IT stakeholders who establish priorities, obtain funding, eliminate roadblocks, and monitor implementation progress. A best practice organizational structure is depicted in Figure 1.

Figure 1: Best Practice Governance Structure

An important first step in establishing an EIM initiative is to engage one or more “EIM Champions” who currently participate in one of the organization’s Executive Steering Committees. These should be C-Suite executives who will serve as executive champions for EIM and continually communicate its strategic importance throughout the enterprise. In a health care organization, EIM champions are often the CMO and CIO.

As important as C-Suite sponsorship is to the successful implementation of an EIM initiative, the linchpin is, without doubt, the EIM Operating Committee. This Governance body is composed of VP or director-level information stakeholders from across the organization with a charter to prioritize and fund projects, develop communication plans, mitigate risks, and monitor progress. Of course, the EIM Operating Committee also requires support from a variety of EIM workgroups with expertise in domains such as data architecture, technical architecture, data acquisition, metadata management, and business intelligence. The Data Governance Work Group is discussed in the next section of this blog post.

Every single successful EIM implementation I have seen in my 20 years of managing data warehouse, business intelligence, and data governance engagements had an effective EIM Operating Committee. Every failed initiative had either no such governance body or an ineffective and disengaged one.

Data Governance

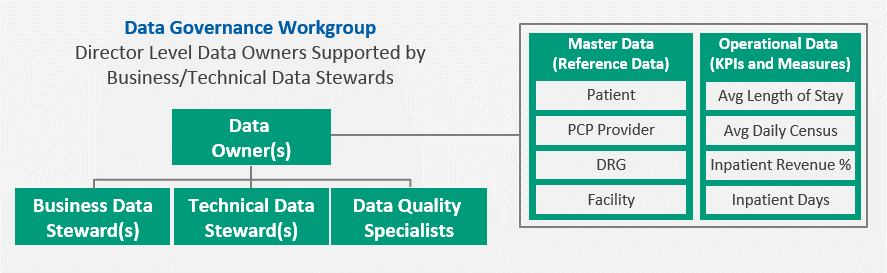

The EIM Operating Committee’s Data Governance Work Group is composed of Data Owners with accountability for one or more types of enterprise-wide reference data, or Master Data. Operational Data, such as Key Performance Indicators (KPIs) or other business measures, is typically managed collaboratively by multiple Data Owners. It is important to note that Data Owners, who are often director-level information stakeholders, are supported by Business and Technical Data Stewards who create data definitions, identify and resolve data quality issues, and propose algorithms for computing KPIs.

A best practice organizational structure for a Data Governance Work Group is demonstrated in Figure 2.

Figure 2: Best Practice Data Governance Work Group

The Data Governance Work Group has three key objectives to ensure greater accountability for information quality and more consistent definitions and business rules for information management. These objectives are to:

- Proactively Identify Issues: Data quality issues are often identified “downstream,” when an executive or stakeholder questions the information contained in a report or dashboard. Extensive effort is then required to trace back to the root cause of the issue, only to find that data was entered incorrectly or that it was transformed in some way using invalid business rules. An effective data governance program includes methodologies to identify data quality issues before they become visible and costly.

- Reactively Resolve Issues: Many organizations have weak business processes in place to remediate data quality issues. Far too often, the Information Technology (IT) department is held accountable, when the actual causes of the problem are poorly defined business rules, inconsistent data definitions, or undocumented and unapproved workflows. Data governance ensures that well-documented workflows are established, and business stakeholders are held responsible for data quality with support from IT.

- Enforce Standards: Many data quality issues are caused by the lack of consistent data definitions, algorithms, and business rules. Data governance establishes roles and responsibilities to ensure consistency of data management standards, which improves the quality of this invaluable “metadata.” This also gives end users a much higher level of confidence in the information they use to make business decisions.
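
To make the first and third objectives concrete, here is a minimal sketch of what a proactive check against agreed data definitions could look like. It is an illustration only, not a description of any particular data governance tool: the `DataRule` structure, the field names, the owner titles, and the validity rules are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DataRule:
    """One agreed data definition with a named accountable owner (hypothetical structure)."""
    field: str
    owner: str
    description: str
    is_valid: Callable[[object], bool]


# Hypothetical standards for a patient master record.
RULES = [
    DataRule("patient_id", "Director, Patient Access", "non-empty identifier",
             lambda v: isinstance(v, str) and v.strip() != ""),
    DataRule("birth_year", "Director, Patient Access", "year between 1900 and 2100",
             lambda v: isinstance(v, int) and 1900 <= v <= 2100),
    DataRule("gender_code", "Director, Clinical Informatics", "one of F, M, U",
             lambda v: v in ("F", "M", "U")),
]


def find_issues(record: dict) -> list[str]:
    """Return one message per violated rule, naming the accountable owner."""
    issues = []
    for rule in RULES:
        value = record.get(rule.field)
        if not rule.is_valid(value):
            issues.append(
                f"{rule.field}={value!r} violates '{rule.description}' "
                f"(owner: {rule.owner})"
            )
    return issues


good = {"patient_id": "P-001", "birth_year": 1984, "gender_code": "F"}
bad = {"patient_id": " ", "birth_year": 84, "gender_code": "X"}

# Run the checks at the point of entry, before the data reaches a report.
for record in (good, bad):
    for issue in find_issues(record):
        print(issue)
```

The first record passes every rule and the second violates all three. Because each rule carries its owner, every issue is routed to an accountable business stakeholder rather than defaulting to IT.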

Master Data Management (MDM)

It is easy to see how effectively managing even a portion of the organization’s inventory of Master Data types could become a daunting task. Fortunately, a class of tools and methodologies is available to facilitate this significant data integration effort. A comprehensive discussion of the features of MDM tools is beyond the scope of this blog post, but I would like to stress the importance of determining an MDM approach at the beginning of any data governance initiative.

Before you define your functional and technical requirements for an MDM tool and possibly create an RFP, you should first decide whether to take an operational approach to MDM or an analytic approach. The analytic MDM approach focuses on downstream data in the data warehouse, or more typically, in what are known as dimensional data marts. The operational approach to MDM focuses on upstream master data in the source systems. This latter approach is considered best practice by CTG. However, the key point to remember is that it is very difficult to change direction later in the MDM implementation.
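
The difference between the two approaches can be shown with a toy example. The sketch below is a simplification under stated assumptions: the two source systems, the provider record, and the `MASTER_NAMES` lookup are hypothetical, and real MDM tools add matching, survivorship rules, and stewardship workflows that are not modeled here.

```python
import copy

# Two hypothetical source systems holding the same provider under different spellings.
source_systems = {
    "billing": [{"provider_id": "1001", "name": "St. Mary's Hosp."}],
    "scheduling": [{"provider_id": "1001", "name": "Saint Marys Hospital"}],
}

# The agreed master name per provider_id, assumed to be approved by the Data Owner.
MASTER_NAMES = {"1001": "St. Mary's Hospital"}


def load_data_mart(sources):
    """Copy every source record into a flat, downstream reporting table."""
    return [dict(rec, system=name) for name, recs in sources.items() for rec in recs]


def analytic_mdm(sources):
    """Conform names downstream only; the source systems are left unchanged."""
    mart = load_data_mart(sources)
    for row in mart:
        row["name"] = MASTER_NAMES.get(row["provider_id"], row["name"])
    return mart


def operational_mdm(sources):
    """Correct names upstream in the sources, then load the mart as usual."""
    for recs in sources.values():
        for rec in recs:
            rec["name"] = MASTER_NAMES.get(rec["provider_id"], rec["name"])
    return load_data_mart(sources)


def distinct_names(rows):
    """Collect the set of distinct provider names in a collection of records."""
    return {row["name"] for row in rows}


def all_source_records(sources):
    """Flatten the records of every source system into one list."""
    return [rec for recs in sources.values() for rec in recs]


analytic_sources = copy.deepcopy(source_systems)
analytic_mart = analytic_mdm(analytic_sources)

operational_sources = copy.deepcopy(source_systems)
operational_mart = operational_mdm(operational_sources)

print("analytic mart:", distinct_names(analytic_mart))
print("analytic sources:", distinct_names(all_source_records(analytic_sources)))
print("operational sources:", distinct_names(all_source_records(operational_sources)))
```

In both cases the data mart ends up with a single provider name. Only the operational approach leaves the source systems consistent as well, so users of those systems see the same master data as users of the reports.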

In part three of this blog series, I will discuss Data Architecture, Data Acquisition, and Technical Architecture.

AUTHOR

John Walton

Client Solution Architect

John Walton is a CTG Client Solution Architect and consulting professional with more than 35 years of IT experience spanning multiple disciplines and industries. He has more than 20 years of experience leading data warehousing, business intelligence, and data governance engagements. He has extensive experience working with a broad range of healthcare and life sciences organizations, including IDNs, national healthcare payers, regional HMOs, a global pharmaceutical company, academic medical centers, and community and pediatric hospitals.
